Science & Technology | Issue 6, 2017

The Century of Complexity

Dr Vasileios Basios talks to Jane Clark and Michael Cohen about new ideas in science

In 2000, Stephen Hawking, in response to a question about the way that science is developing, replied: “I think the next century will be the century of complexity”. He was pointing towards the increasing importance of a set of scientific ideas that emerged in the 1980s and 90s that allow us to find order within highly complex, interconnected systems such as the weather, traffic movement, the stock market and the ecosystem. As the 21st century unfolds, Hawking’s prediction looks more and more prescient. The development of ever more powerful high-speed computing capacity and graphics means that what has become known as ‘Complexity Theory’ is finding application in a huge range of areas, from social networks and global financial systems to the workings of the brain and the immune system, and even the fundamental processes of genetic reproduction.

Dr Vasileios Basios is at the heart of these developments; he worked with one of the great figures of the 20th century, the Nobel laureate Ilya Prigogine, and is now a senior researcher at The Physics of Complex Systems Department of the University of Brussels. We talked to him about some of the fundamental insights of this new science, and why he sees it not only as a precursor of a fundamental shift in our understanding of nature, but also as a fundamental shift in the very nature of our understanding.

Michael: Can you tell us first about how the study of complexity theory arose – the history of it, in general terms?

Vasileios: Actually, it was not until about 1990 that the term “complexity” started to be used. Before that, it was usually referred to as “chaos theory”. It is important to understand that the scientific term “chaos” has nothing to do with chaos as we understand it in philosophy, like the primordial chaos of Hesiod’s poem “The Theogony”. What we call “chaotic motion” was first identified in the late 19th century by the great Scottish physicist James Clerk Maxwell and the renowned French mathematician Henri Poincaré. It captures the idea that a system is highly sensitive to, and dependent on, its initial conditions and/or parameters. Nowadays this is famously known as “the butterfly effect”. It means that very tiny differences in the starting point can eventually lead to totally different manifestations of the dynamics – that is, in the way the system develops. So such systems are inherently unpredictable.

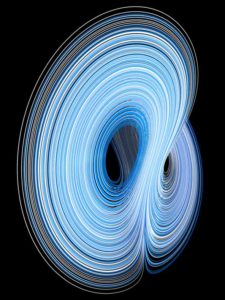

Light trace of a double pendulum. See https://www.youtube.com/watch?v=QXf95_EKS6E

You can see this very clearly when we look at the behaviour of something like a double pendulum. A single pendulum exhibits classic, linear motion around an equilibrium position, but a double pendulum is a complex system (see the video linked above).

This idea was radical in 1900, because at the time there was the naïve idea that if we could fully understand the way that the parts of a system interacted, we would be able to predict how the system as a whole would evolve. So the idea that predictability means determinism and determinism means predictability was shaken by the first investigations of Maxwell and Poincaré, working independently.

Jane: I believe that according to current knowledge, it is literally impossible to absolutely determine the starting condition of any system.

Vasileios: In the beginning, in the time of Maxwell and Poincaré, it was reasonable to think that we could do it. So it was not conceived as a limitation. But now we have learnt that there are inherent limitations mathematically. For example, we cannot pin down an irrational number, like π, with total accuracy because we need an infinite number of decimal places to completely express it. So even if we use many decimal places, there will always be an error and we can never exactly replicate the starting point.

In a fully chaotic system, like turbulence, the growth of the initial error is exponential. So you can calculate on the back of an envelope that after fifty or sixty collisions of a molecule in a turbulent flow (as opposed to an organised flow), all memory of the initial condition is lost!
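As a rough illustration of this exponential error growth (an editorial sketch, not something from the interview), the logistic map is a standard toy model of chaos. The function names and the starting value 0.2 below are arbitrary choices. The code counts how many iterations it takes for two almost identical starting points to drift visibly apart.

```python
def logistic(x, r=4.0):
    """One step of the logistic map, a textbook example of a chaotic system."""
    return r * x * (1.0 - x)


def steps_until_divergence(x0, delta0=1e-12, threshold=0.1, max_steps=1000):
    """Count iterations until two nearby trajectories differ by `threshold`.

    `x0` and `x0 + delta0` stand in for the "true" and the "measured"
    starting condition; `delta0` is the initial measurement error.
    """
    a, b = x0, x0 + delta0
    for n in range(max_steps):
        if abs(a - b) >= threshold:
            return n
        a, b = logistic(a), logistic(b)
    return max_steps


if __name__ == "__main__":
    # Shrinking the initial error a million-fold buys only a few dozen
    # extra steps of predictability, because the error grows exponentially.
    for delta0 in (1e-6, 1e-9, 1e-12):
        print(f"initial error {delta0:g}: "
              f"diverged after {steps_until_divergence(0.2, delta0)} steps")
```

One way to read the "fifty or sixty" figure, assuming (this is not stated in the interview) that the error roughly doubles at each collision: since 2^53 is about 9 × 10^15, an error of one part in 10^16 grows to order one after some 53 doublings.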

Jane: But nevertheless, as far as I understand it, we are not left with a completely random system. Within the apparent ‘chaos’, there are underlying patterns and things like feedback loops, etc. As you yourself said in a lecture you gave recently: “chaotic systems are stably unstable”.

Vasileios: Yes. To understand that we have to know a little bit about statistical mechanics. We don’t know what one little butterfly will do, but when you put all the individual interactions together, you get some surprises, because such a system might be very stable in a statistical sense. So we can know, for example, that out of 10,000 initial conditions with the butterfly effect, 99% of them will go in one direction and 1% will disperse, and this means that you can make statistical predictions.
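The idea that individually unpredictable trajectories can still give stable statistics can be sketched with the same toy model, the logistic map (an editorial illustration; the 10,000 starting points echo the figure in the answer above, while the cutoff, seeds and function names are arbitrary assumptions). For this particular map the long-run fraction of points below a cutoff is known in closed form, so the simulated ensemble can be compared with a prediction.

```python
import math
import random


def logistic(x, r=4.0):
    """One step of the logistic map at r = 4, where it is fully chaotic."""
    return r * x * (1.0 - x)


def fraction_below(cutoff, n_points=10_000, n_steps=100, seed=0):
    """Evolve an ensemble of random starting points; report the share below `cutoff`."""
    rng = random.Random(seed)
    count = 0
    for _ in range(n_points):
        x = rng.random()
        for _ in range(n_steps):
            x = logistic(x)
        if x < cutoff:
            count += 1
    return count / n_points


def predicted_fraction(cutoff):
    """Share below `cutoff` under the invariant density 1 / (pi * sqrt(x * (1 - x)))."""
    return (2.0 / math.pi) * math.asin(math.sqrt(cutoff))


if __name__ == "__main__":
    # No single trajectory can be forecast 100 steps ahead, yet the
    # ensemble share is nearly the same for every seed.
    for seed in (0, 1, 2):
        print(seed, fraction_below(0.25, seed=seed), predicted_fraction(0.25))
```

For a cutoff of 0.25 the predicted share is exactly one third, and each simulated ensemble should land close to it whichever random seed is used.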

Michael: Where does this statistical view fit in the development of complexity theory?

Vasileios: This is the aspect by which complexity developed from physical chemistry and thermodynamics. Thermodynamics and statistical mechanics were the brainchild of the industrial revolution. They were established by people like Maxwell and Boltzmann as they tried to understand the transformation of energy. What emerged from their work, in the mid-19th century, was the prediction of what is called “the heat death of the universe”. In a closed thermodynamic system, which at that time the scientific community believed the universe to be, the second law of thermodynamics tells us that everything will eventually become a kind of ‘dead soup’. Nothing will evolve further, and entropy – which is a measure of disorder in a physical system – will increase and everything will ‘die’ in the end.

Now this view was challenged in the 20th century by many people. Amongst them were Théophile de Donder, a student of Poincaré who for many years, until 1942, was professor here at the Université Libre de Bruxelles, and his student, Ilya Prigogine, who taught me as well as many, many others.

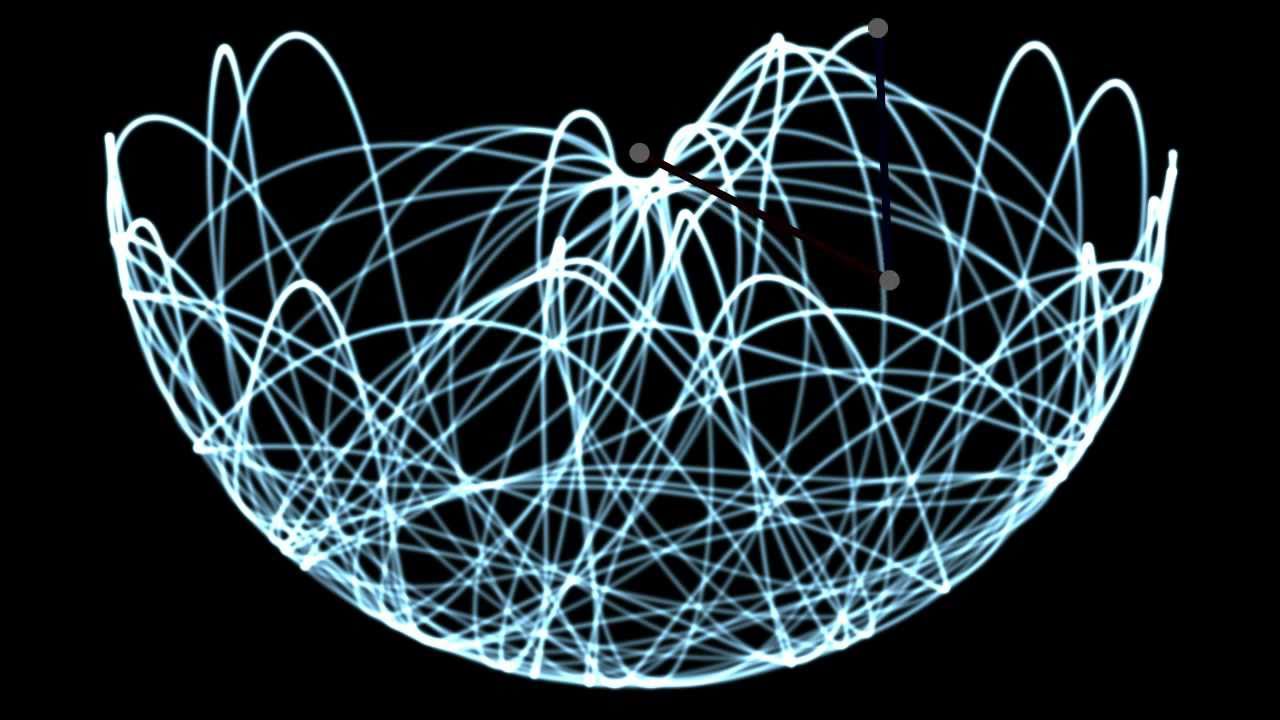

Ilya Prigogine in 1977, the year he received the Nobel Prize. Photograph: Keystone Pictures, USA/Alamy Stock Photo

Prigogine did something which for me is an example of non-linear thinking itself. He approached the problem of the heat death of the universe by thinking out of the box and attacking it in its premises, not in its outcome. He accepted that if you have a closed system, it will eventually suffer “heat death”. But what if the system is not closed? What then happens with entropy? He went on to express this in a series of theorems, which are quite celebrated these days. They show that in open systems, it is not the entropy itself that matters, but the production of entropy. And eventually he arrived at the idea of “dissipative structures”, for which he won the Nobel Prize in 1977.

Dissipative systems are open to their environment, and they exchange information and matter with it. They are not in what was classically regarded as thermodynamic equilibrium; rather they are exemplars of systems far from equilibrium, but nevertheless, they exhibit novel steady states. Therefore, the role of chaotic attractors is essential for them. The solar system is an open system because the sun is constantly inputting energy into it. And living beings can be seen as dissipative structures because they exchange information, energy and matter with their environment and with each other.

Jane: So understanding these dissipative structures is really the heart of complexity theory?

Vasileios: Yes. Prigogine developed these theorems in the mid-twentieth century, and his students René Lefever and Gregoire Nicolis then took them further. Gregoire Nicolis is, among his many scientific abilities, a master of catastrophe theory, which is a branch of mathematics proposed by René Thom. These days it is called “non-linear dynamics”, and Lefever and Nicolis used this tool to prove that these dissipative structures are real and can be modelled mathematically.

They also showed that they can be related to chemical reactions. There was one particular class of chemical reactions that had been discovered, called the Belousov-Zhabotinsky reaction, which no one had been able to understand, mainly because it is ‘oscillating’ – that is, it switches backwards and forwards between two different states. This is not meant to happen in chemical reactions, which are meant to be irreversible. But in this reaction, you can actually see the liquid changing from red to blue and then back to red again.

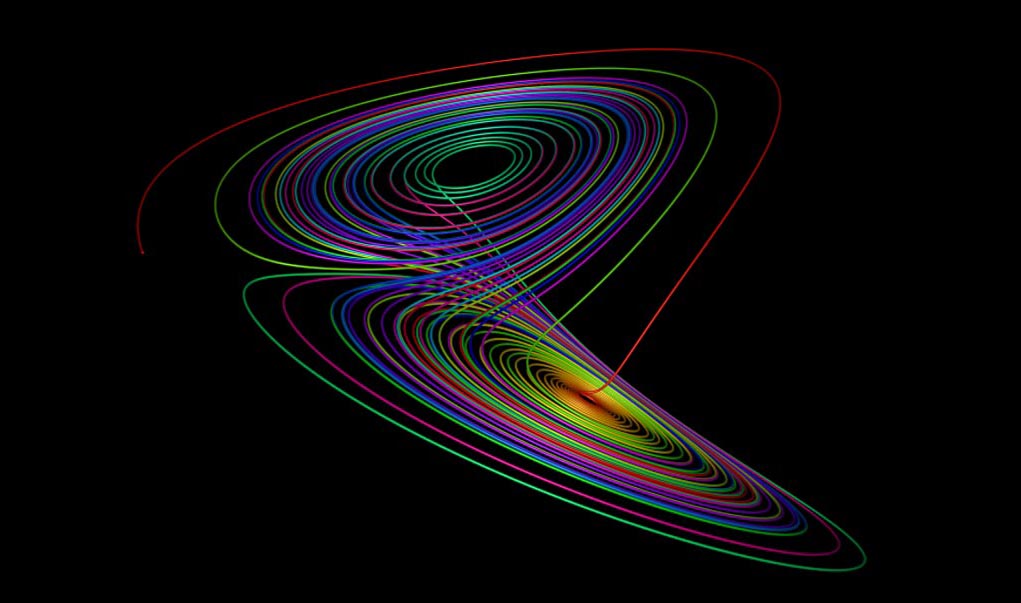

Lefever and Nicolis explained this undeservedly forgotten and hidden class of oscillating chemical reactions, showing that these systems are engaged in a kind of chaotic ‘game’ whereby they are in equilibrium in one sense and disequilibrium in another. There is co-existence of equilibrium and disequilibrium, and this manifests in the formation of coherent patterns on the surface of the liquid (see video below).

Video: Belousov-Zhabotinsky Reaction. 3:36 minutes
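A minimal sketch of how an open chemical system can settle into sustained oscillation is the Brusselator, a textbook two-variable model associated with Prigogine's Brussels group. It is used here as a stand-in: it is not a model of the Belousov-Zhabotinsky chemistry itself, and the parameter values, step size and function names are illustrative assumptions. In this model the steady state is stable when b < 1 + a² and gives way to a persistent oscillation when b > 1 + a².

```python
def brusselator(x, y, a, b):
    """Rate equations of the Brusselator for the two intermediate concentrations."""
    dx = a + x * x * y - (b + 1.0) * x
    dy = b * x - x * x * y
    return dx, dy


def rk4_step(x, y, a, b, dt):
    """Advance (x, y) by one classical fourth-order Runge-Kutta step."""
    k1x, k1y = brusselator(x, y, a, b)
    k2x, k2y = brusselator(x + 0.5 * dt * k1x, y + 0.5 * dt * k1y, a, b)
    k3x, k3y = brusselator(x + 0.5 * dt * k2x, y + 0.5 * dt * k2y, a, b)
    k4x, k4y = brusselator(x + dt * k3x, y + dt * k3y, a, b)
    x += dt * (k1x + 2.0 * k2x + 2.0 * k3x + k4x) / 6.0
    y += dt * (k1y + 2.0 * k2y + 2.0 * k3y + k4y) / 6.0
    return x, y


def late_time_swing(a, b, dt=0.01, t_transient=100.0, t_measure=50.0):
    """Return max(x) - min(x) after the initial transient has died away."""
    x, y = 1.0, 1.0  # arbitrary starting concentrations
    for _ in range(int(t_transient / dt)):
        x, y = rk4_step(x, y, a, b, dt)
    lo = hi = x
    for _ in range(int(t_measure / dt)):
        x, y = rk4_step(x, y, a, b, dt)
        lo, hi = min(lo, x), max(hi, x)
    return hi - lo


if __name__ == "__main__":
    print("b = 1.5 (below threshold):", late_time_swing(1.0, 1.5))
    print("b = 3.0 (above threshold):", late_time_swing(1.0, 3.0))
```

Below the threshold the swing shrinks towards zero, meaning the mixture settles to a steady state; above it the concentration keeps cycling for as long as the simulation runs.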

Michael: So each small part of the reaction is unstable, but together they make a stable whole.

Vasileios: Yes. The individual atoms are in a state of molecular chaos, but the overall patterns that emerge are stable – although in each experiment they are never exactly the same, because they depend on the defining boundary, for example, and other constraints. So this gives us a different kind of understanding of stability which is not local, but global.

Patterns generated in a Belousov-Zhabotinsky reaction. Photo from: https://debanupf.wordpress.com/author/debanupf/

Modern Complexity Theory

Jane: So in the story of complexity theory, we first of all have the work of Poincaré; after which Prigogine showed that these open systems really exist; then Nicolis explained oscillating reactions. And together, they established the foundation of a new science.

Vasileios: The next stage happened at the end of the last century, and concerned events which took place here in Brussels. People at first argued against Prigogine’s ideas, maintaining that what he was talking about were not really stable patterns but just transient phenomena. However, he would answer that we are all transient phenomena. Why should we care about eternity? We should care about transient phenomena, such as we are as living beings. So there was a big battle which settled down in the 80s in favour of the ideas of Prigogine and his co-workers. It was around that time that people started building research institutes to understand chaotic dynamics. In the States, there were two or three, for example, at the California Institute of Technology and Santa Fe. In Europe there was Prigogine’s group working here in Brussels, and in the UK, a centre at Warwick University, and one in Aberdeen. These days there are many more, and we are witnessing a proliferation of complexity research centres all over the world.

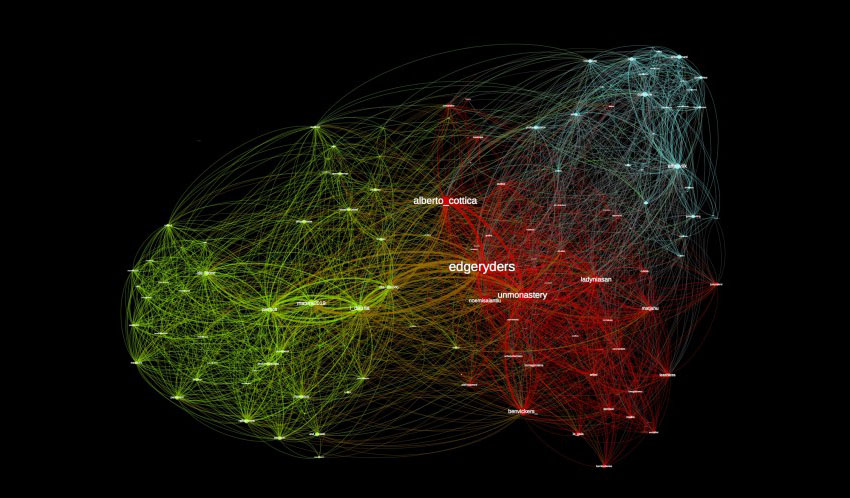

This was all given further impetus when the physicist Stephen Hawking famously declared that the 21st century would be “the century of complexity”. Recently there has been an explosion of complex systems research, for example, in “network theory”. Everything seems to be drawn up as a network – mainly because we are now in an information age and we have phenomena such as Facebook and Twitter which demand an understanding of complex communities.

Michael: So how would we now define a complex system?

Vasileios: It is a system which comprises many entities in non-trivial relations. That is the main definition; many entities interrelated by non-linear interactions.

Michael: Could you explain what is meant by a “non-linear interaction”?

Vasileios: One way of understanding it is that if cause and effect are linked in a linear way, small changes result in small effects and large causes result in large effects. But in non-linear interactions, the effect of two things, A and B interacting, is not just A + B; it is more than the sum of the parts. Usually non-linear interactions involve a feedback system.
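The "not just A + B" point can be made concrete with two made-up response functions (both hypothetical, chosen only for illustration). For a linear response, the effect of two causes together equals the sum of their separate effects; for a non-linear one, an extra interaction term appears.

```python
def linear_response(x):
    """Hypothetical linear system: the effect is proportional to the cause."""
    return 3.0 * x


def nonlinear_response(x):
    """Hypothetical non-linear system: the quadratic term couples the causes."""
    return x + 0.5 * x * x


def superposition_gap(f, a, b):
    """How far the joint effect f(a + b) departs from the sum f(a) + f(b)."""
    return f(a + b) - (f(a) + f(b))


if __name__ == "__main__":
    print(superposition_gap(linear_response, 2.0, 5.0))     # 0.0
    print(superposition_gap(nonlinear_response, 2.0, 5.0))  # 10.0
```

For this particular non-linear function the gap works out to a × b, so it grows with both causes at once, which is one simple sense in which the whole exceeds the sum of its parts.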

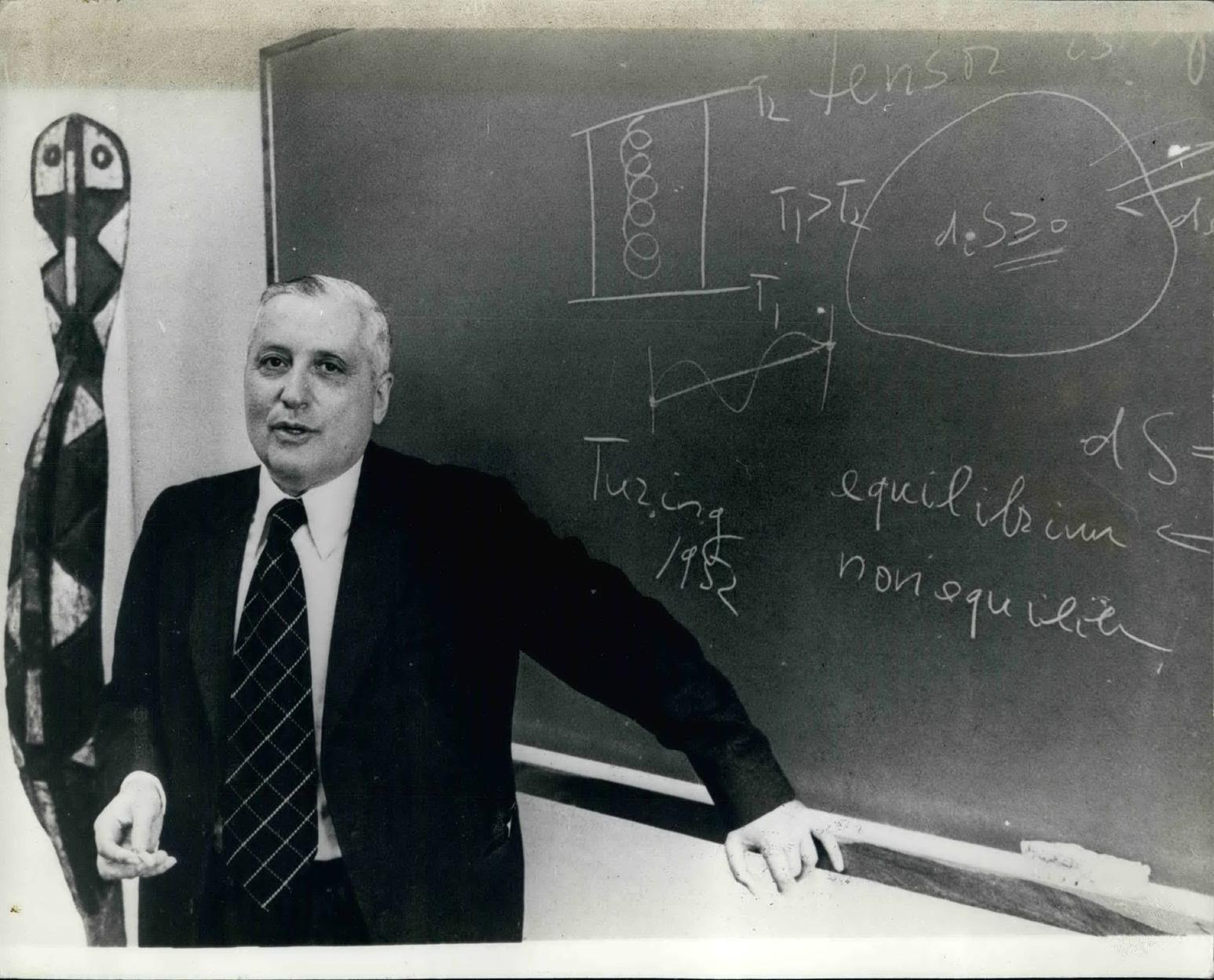

A Lorenz Attractor in creation. Image from paulbourke.net

Jane: Hence the famous remark by Lorenz that the flap of a butterfly’s wing can cause a tornado in Texas.

Vasileios: Exactly, and moreover, this interaction between the parts of a system was precisely what statistical mechanics was trying to understand. It looked at individual atoms interacting in quite simple ways in networks which were nothing like as complex as those we are trying to understand now. But we know that even when the interactions between the individual components are simple, it’s not so simple to understand what’s going on in the system overall. The prevailing idea which constituted the major research plan in the 19th century was to reduce everything to the behaviour of parts, but that approach has either failed or reached its limits.

Jane: Could you give us some examples of the type of systems that are now being studied? You mentioned social networks.

Vasileios: There are many possible examples, but social networks, and other types of complex network, are perhaps the best known at the moment. For instance, there was a study done recently by Duncan Watts and Steven Strogatz, which repeated a social experiment done in the 1960s by Stanley Milgram, at which time it was conducted with physical messages in envelopes. The idea was to send out a chain mail, and monitor when and how it would come back to you. So each participant would be assigned the task of contacting someone that they did not know in a distant country – say, in Japan. They did not have the address, but they might have a friend of a friend who worked in the Embassy. So they sent it to that person, and they in turn passed it on to someone else, etc. The surprising result of these experiments is that the ‘chains’ of connections are very short – five or seven links. As a result “six degrees of separation” has become a famous phrase associated with these experiments. Nowadays these are called ‘small-world networks’ because they give us the familiar feeling of “Wow! It’s a small world!”.

The topology of different social networks

From this experiment, which was published in 1998, has sprung this new “network science”, which can be seen as a collaboration between physics and social science. Out of it have come some completely new concepts for physics; for example, the idea that you have to look at the distribution of these networks, and their topology – that is, the way that the parts are interrelated.

So now we know that networks can have different and distinctive topologies. You can have a military type of topology, where there is a chain of command coming from the person at the top, which has a very strict hierarchy and is expressed in a very ordered lattice. Or you can have a random network, in which there is no particular rule that determines the links between neighbouring participants, such as you might get in a crowd. In a small-world network, we find, on the one hand, that there are “hubs” or “nodes”, who are people through whom many connections are made – celebrities, or politicians, or doctors and priests who meet a lot of people in a community. And on the other hand, we find that the majority of people have few acquaintances. So there is a mixture of a few people who have many connections – the “hubs of the network” – and many people who have few connections, who just know their friends and their neighbours, for example. Practically, these networks allow us to understand better how things spread through communities – infectious diseases, for instance.
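The contrast between an ordered lattice, a random network and a small-world network can be sketched with a simplified version of the rewiring model introduced by Watts and Strogatz (an editorial illustration; the network size, number of neighbours, rewiring probabilities and function names are all assumptions). The sketch measures two things: the average number of links separating two people, and how often a person's acquaintances also know each other. It does not attempt to reproduce the hubs described above.

```python
import random
from collections import deque


def ring_lattice(n, k):
    """Ordered network: each node is linked to its k nearest neighbours on a ring."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for offset in range(1, k // 2 + 1):
            j = (i + offset) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj


def small_world(n, k, p, seed=0):
    """Rewire each lattice link with probability p (p=0: lattice, p=1: random)."""
    rng = random.Random(seed)
    adj = ring_lattice(n, k)
    for offset in range(1, k // 2 + 1):
        for i in range(n):
            j = (i + offset) % n
            if j not in adj[i] or rng.random() >= p:
                continue
            candidates = [m for m in range(n) if m != i and m not in adj[i]]
            if not candidates:
                continue
            new = rng.choice(candidates)
            adj[i].discard(j)
            adj[j].discard(i)
            adj[i].add(new)
            adj[new].add(i)
    return adj


def average_path_length(adj):
    """Mean number of links on the shortest route between reachable pairs (BFS)."""
    total = pairs = 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs


def clustering(adj):
    """Average share of a node's neighbour pairs that are themselves linked."""
    total = 0.0
    for nbrs in adj.values():
        nb = list(nbrs)
        k = len(nb)
        if k < 2:
            continue
        links = sum(1 for a in range(k) for b in range(a + 1, k) if nb[b] in adj[nb[a]])
        total += 2.0 * links / (k * (k - 1))
    return total / len(adj)


if __name__ == "__main__":
    for label, p in (("lattice", 0.0), ("small world", 0.1), ("random", 1.0)):
        g = small_world(200, 6, p)
        print(f"{label:12s} path={average_path_length(g):.2f} clustering={clustering(g):.2f}")
```

Rewiring only a small share of the links should shorten typical paths sharply while leaving most of the local clustering intact, which is the combination the term "small world" refers to.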

Jane: Of course, the complex system that we all immediately think of is the weather.

Vasileios: Yes. With weather we also have the existence of all kinds of physical nodes and hubs, and the interactions between the different components are in general well defined. There are also constraints such as local terrain – mountains, for instance – which are extremely important, and which we can also know with quite a degree of accuracy. Even so, although we may know every little detail of these things, in the end the weather itself is unpredictable – after a “horizon of predictability”, we always reach a limit of certainty. The accuracy of our predictions has actually got much better in recent years and we now have a pretty good idea of the weather for the next five days, and this is because we have been able to build these limits of predictability into our measurements. So knowing what you cannot know is an advantage in knowledge.

A murmuration of starlings near Gretna Green, Scotland. Photograph: greatonmywall/Alamy Stock Photo

Other examples of complex systems are: cells and intercellular interactions; the brain; traffic; crowds, and crowd management; the flight of birds like starlings (see video below).

Video: Flight of the Starlings. 2:00 minutes

And of course, ecology; there are many complex systems in ecology, and here we would say that there were pioneers who were there before us – people like James Lovelock, who postulated the Gaia Hypothesis. Lovelock was not part of the complexity crowd, but he did something which is very fundamental for complexity science.

Jane: I understand that a new area of growth is with financial systems.

Vasileios: Financial institutions like banks are interested in prediction. If they can predict the stock market, they will make more money. The financial system is a non-linear system in which the individual components are mutually dependent, and so complexity theory has a number of tools which are helpful – just as they are in predicting the weather.

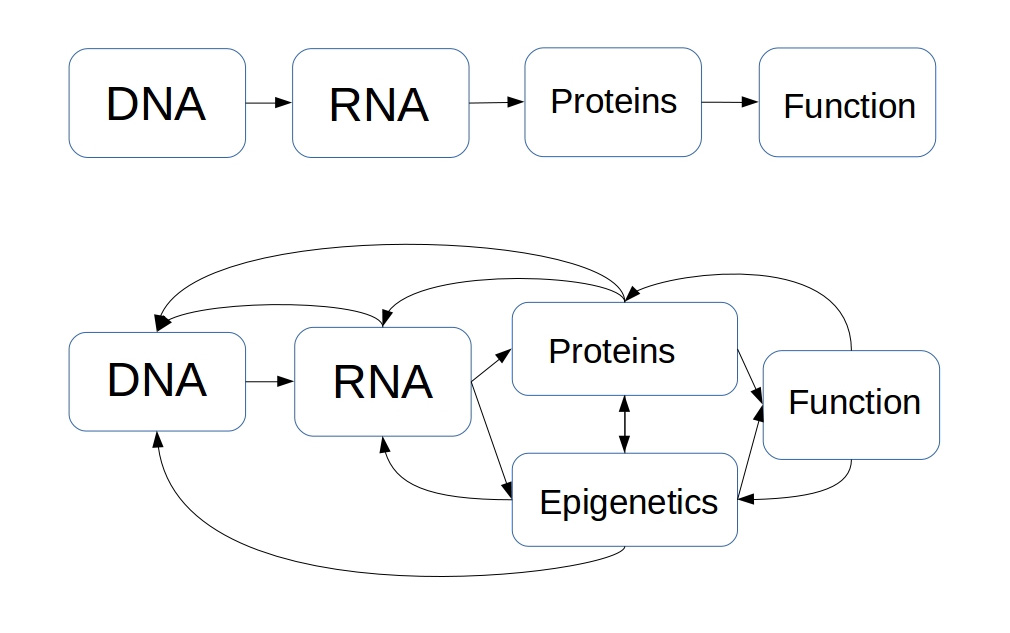

Perhaps the most interesting area of application right now is biology. This has arisen out of ‘the blunder of DNA’, if I may speak of it in this way. You know that in the 1990s we set out to map the entire human genome and we invested a lot of money on the understanding that we were going to discover the fundamentals of biology – the alphabet of life, if you like. But there is another, previously rather neglected, area of biology called “epigenetics”, which tells us that there can be stable hereditary traits that do not involve modification of the DNA. Epigenetics is basically the science of how genes are expressed and proteins produced. This is very complex in terms of DNA-RNA-protein production.

There used to be a ‘central dogma’, which was a hierarchical model in which, like a general, DNA orders the making of RNA, then RNA orders the making of proteins. But now, as the late scientist Richard Strohman said: “We know that DNA makes RNA make protein whenever that happens.” So he is telling us that sometimes RNA makes proteins, but sometimes the proteins interfere with the RNA, or with the signals from the environment, and so on. So what we really have here is a classic feedback situation – i.e. a non-linear interaction – and now it has become standard practice for people to produce epigenetic models which essentially use complexity theory, and these are challenging the central dogma.

DNA-RNA-protein interactions. Above: the “central dogma”. Below: current understanding

Jane: Why do you call it “the blunder” of DNA?

Vasileios: Because it seems to me that in general the Human Genome project failed at an epistemological, or even metaphysical, level in aiming to find this alphabet of life. Simple organisms are much more complex than they were willing to acknowledge; for example, there is very little genetic difference between human beings and a mouse, but the difference in the way the two species operate is quite large. So this means that there must be much more complex processes determining biological function than a simple DNA-RNA-proteins chain.

I think that Richard Strohman’s ideas are very important in this area. He wrote a paper in 1997 called “The coming Kuhnian revolution in biology” in which he maintains that we are going through a change of paradigm – a change of world view – which can be likened to the Copernican revolution in cosmology when we changed from a geocentric to a heliocentric view. In this case, the change is moving away from the notion that we are defined or determined by our DNA. Strohman was in some ways a maverick and a heretic, known for making some statements about AIDS which upset a lot of people, but on the subject of the Human Genome project, he predicted that it would be a failure and it was. I think even at a financial level, we got very little back from our investment in terms of new drugs or cures for diseases.

Living systems and consciousness

Michael: You have said that complex systems bridge the gap between the living and the dead. Is this because, as we have already mentioned, complex systems are like living beings because they have the capacity for self-organisation?

Vasileios: If we go back to the ancient world, Aristotle envisaged a hierarchy of beings, and at the bottom he placed things which are inanimate, which means that they cannot move. So the first people on earth made a fundamental distinction between things which are animated and can move, and therefore have an animus or a soul, and beings which cannot move. But now we know that there are many things that arise from non-living things which have the properties of life – like self-organisation and pattern formation, or morphogenesis (the way that an organism develops its own shape) and homeostasis (the ability of a system to maintain its own internal environment).

At the moment, it is very fashionable to talk about artificial intelligence (AI) – things which have been fashioned by human beings, or are part of another intellect which is not human. I find it fascinating to consider whether mind needs a substratum to exist, and if it does, what kind of a substratum it needs in order to manifest. This is a bit ‘far-out’ for ordinary science, and people working in AI are more daring in these matters. I am not concerned with AI as such, but if we are going to consider matters of consciousness, then I think we have to come back to considering the ancient concept of the “great chain of being” – that somehow consciousness manifests itself from the rock to the plant to the human being.

Michael: This is very much the point of view of the neo-Platonists, and in fact, mystical philosophers such as Ibn ʿArabī. Ibn ʿArabī regards everything as living, and within the mystical traditions, it is said that people who have fully realised their own consciousness can understand the speech of inanimate things.

Vasileios: I believe that all the great mystical traditions would agree with this – and this brings us back to science, because science can help people to reach these degrees and open their minds to the possibility of such experiences. Science cannot of course give them the experience, but it can help people understand how these things can be. Discussion about this kind of thing may be seen as taboo at certain levels of scientific understanding, but real scientists are always driven by the mystery of existence. And sometimes in the ‘high echelons’ for very successful scientists like Prigogine or others, it is OK to talk about the ‘big questions’ of science and philosophy or even metaphysics.

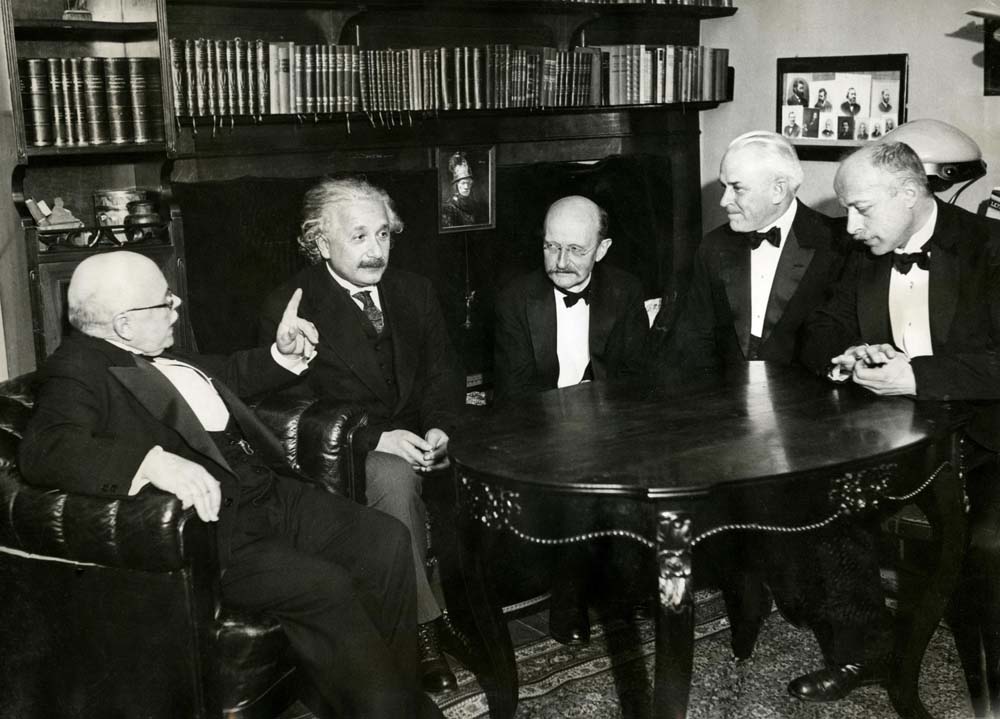

One example is the great quantum physicist Max Planck, who is one of the founders of modern science, and has a great many laboratories named after him. He said:

I regard consciousness as fundamental; matter is derivative from consciousness. We cannot get behind consciousness. Everything we talk about, everything that we regard as existing, postulates consciousness.

So he saw consciousness not as an epiphenomenon of the brain, but a fundamental reality behind the matrix of reality – meaning, behind the reality of everything we encounter. That was his own conviction, but I think that there are very few people working in these laboratories who would know that, or ponder upon such things, nowadays.

Max Planck (centre) with (his right) Albert Einstein and Walther Nernst, and (his left) Robert Andrews Millikan, and Max Laue, all physicists and winners of the Nobel Prize, Berlin, 1928. Photographer unknown via Wikimedia Commons

Michael: You have said various other things over the years about the philosophical implications of complexity theory. For instance, you have said that it heralds the end of one-way reductionist science, calling it: “a radically new kind of science which deals with a multi-faceted reality and reveals the world as an interconnected organic whole.”

Vasileios: I guess nobody is against reductionism as a tool; but we are against reductionism as a master. It is a nice servant, but it cannot define our goals, or our survival. Reductionism as an “-ism” is not just about the act of reducing things to their constituent parts, because when you reduce something down you can be aware of what you are doing and where you started. But with reductionism, the aim and purpose is to reduce everything to little pieces and to forget where you started.

Alvin Toffler expressed it nicely in his foreword to Prigogine’s book Order Out of Chaos which he wrote with Isabelle Stengers:

One of the most highly developed skills in contemporary Western civilisation is dissection: the split-up of problems into their smallest possible components. We are good at it. So good, we often forget to put the pieces back together again.

Michael: But do you not think that the technology we have developed from reductionism has been a remarkable success?

Vasileios: Yes, indeed, of course it has been. But as Francisco-Javier Varela has put it: “to do this [reduce to parts] on purpose is quite useful; to forget that we did so is quite dangerous”. What we tend to forget is that in our technology, we have not only broken things down into their parts, but also managed to put them back together. When you build a successful helicopter or a smartphone, it is not only a reduction to Newtonian forces but also a synthesis; as in art, there is a creative vision behind it.

One fascinating question around at the moment is whether technology is a specifically human activity, because other species also build things. We of course know that ants and bees have a technology, but now we also know that microbes – microcellular organisms – build bridges out of calcium; they make sponges, and so on. Even our bodies are full of technology; for example, the construction of filters like our kidneys, etc.

Michael: You have also said that complexity is science which has to “reflect on its own foundations”.

Vasileios: I think that complexity is not unique in teaching us this lesson. Almost all the great scientists and revolutionaries of science (Poincaré and Maxwell included) reflected upon their own methodologies. In terms of recent developments, I think we are forgetting to reflect upon the basic principles of science. It is often said that the attitude at the moment is basically: “shut up and calculate”. Quantum mechanics is full of paradoxes; the interactions being uncovered in the high-powered colliders are extremely complex; there is mind-boggling work going on in biology with the discovery of the human genome, etc. And so the whole impetus at the moment in “big science” is on computation; there are hundreds or probably thousands of scientists involved in this. But we are not allowing ourselves to go back and investigate our foundational premises.

If you look at recent Nobel Prizes, a lot of them after about 1980 have not been awarded for involvement in fundamental work; it is all about major technological application. This is not to say that the winners have not done great science, but it is not as it used to be. There are of course exceptions, but the major trend is to support the convenient – things that are useful – and not to ask how we can investigate the grey areas of science – the unanswered questions.

Michael: This is a very natural human tendency, don’t you think; to be a bit afraid of the unknown and to want to stay on familiar territory?

Vasileios: Yes, but scientists have to be trained and educated to do their job – and selected. It is a bit like training mountaineers; you have to select people who actually want to climb the mountain even though there is some danger. In science the danger is intellectual – you might propose a theory that is wrong and be ridiculed, or you might search and find nothing – and so you have to have a reward system which encourages a bit of risk taking and is not just about making money and having a successful career.

Mapping of a Twitter storm; a contemporary application of complexity theory by Alberto Cottica

Our Global Future

Jane: One of the things which has engaged me about complex networks which involve human beings, is that for them to work effectively, the individuals have to have a sense of agency, and a degree of consciousness and self-awareness. This is in stark contrast to mechanistic models, where the individual is just seen as an inert thing to be manipulated.

Vasileios: It is the sense of agency in the individual which gives the system its openness – that makes it an open system. By “a sense of agency”, we mean that the individual has free will; they collect information from their surroundings, and they feed that information back into the whole system after they have processed it. So it is not a simple mechanical thing – in the processing of the information there is a kind of inherent freedom which needs to be taken into account in the calculations.

This freedom can lead to good, bad or neutral outcomes. When dealing with crowds, for example, you can reduce the number of variables to binary decisions; a crowd can do good things or bad things, and we know that they can behave horribly sometimes. As to whether a crowd will become a kind of intelligent entity in itself, here complexity theory can help. We deal with this by proposing that there are different levels of awareness, and consequently you can perhaps affect whether the decision is good or bad.

Jane: So can we apply this to a government at a higher level? We are becoming a global society now, which means that we are all part of a complex network, or several complex networks.

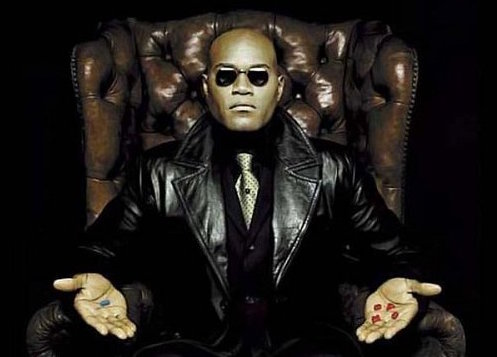

Vasileios: It would be possible to organise a global culture in a hierarchical way – like an Orwellian state – in which everything is organised from above. Or we could have a Matrix-like system, which uses human beings like batteries. Or we could have an open-ended complex system of free individuals. But one thing that is clear is that, even within the dystopic visions, there is going to be a ‘crack’ by which the system remains to some degree open; in Orwell, the crack was love; in the Matrix, it was knowledge.

Another thing that seems clear is that, purely from the point of view of complexity theory, we are approaching a bifurcation point in our economic and political/societal systems. This is evident when we look at the observable distribution of wealth. There is a well-known distribution called the Pareto Distribution which expresses the way that resources are generally distributed through a society. It states that 80% of the wealth is usually owned by 20% of the population, and conversely that the remaining 20% of the wealth is distributed across the other 80% of the population. This does not seem to be very just or democratic, but it is a natural distribution: it – or something very similar – is found not only within our societies but also in the food distribution within a community of ants or a hive of bees, and in the distribution of size amongst grains of sand. In many kinds of natural system, we find this power law.

If we were in the situation described by the Pareto Distribution, we would feel as if we were in paradise. It is a very stable condition which is sustainable across centuries. But now, we are in a situation globally where the distribution of wealth has become much more extreme; at the moment, it is something like 99.9% of the wealth being owned by 0.1% of the population, and it is becoming more polarised all the time. This is not stable, and it is a sign of a system which is being driven towards a bifurcation point – that is, a point at which some major kind of change has to take place and a choice made, whether that is conscious or unconscious, planned or spontaneous. But there is no way of telling right now whether we will transition to a utopia or a dystopia. As Fritjof Capra has said: a bifurcation can be a breakthrough, or a breakdown.
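As a rough editorial illustration of the two wealth splits quoted above (it is not part of the interview), the sketch below assumes an idealised Pareto Type I distribution with tail index alpha greater than 1, for which the richest fraction p of the population holds a share p^(1 − 1/alpha) of the total. The function names `top_share` and `alpha_for_split` are hypothetical, and real wealth data follow a Pareto law only approximately, mainly in the upper tail.

```python
import math


def top_share(p: float, alpha: float) -> float:
    """Share of total wealth held by the richest fraction p.

    Assumes an idealised Pareto Type I law with tail index alpha > 1.
    """
    return p ** (1.0 - 1.0 / alpha)


def alpha_for_split(p: float, share: float) -> float:
    """Tail index alpha at which the richest fraction p holds `share`.

    Obtained by solving share = p ** (1 - 1/alpha) for alpha.
    """
    return 1.0 / (1.0 - math.log(share) / math.log(p))


# The classic 80/20 split mentioned in the interview.
alpha_80_20 = alpha_for_split(0.20, 0.80)

# The far more extreme split quoted as "something like" 99.9% / 0.1%.
alpha_extreme = alpha_for_split(0.001, 0.999)

print(f"80/20 split      -> alpha = {alpha_80_20:.4f}")
print(f"99.9/0.1 split   -> alpha = {alpha_extreme:.6f}")
print(f"check: top 20% share at alpha_80_20 = {top_share(0.20, alpha_80_20):.3f}")
```

Under these assumptions the 80/20 split corresponds to a tail index of roughly 1.16, whereas a 99.9/0.1 split pushes it to about 1.0001, very close to the value 1 at which the mean of the idealised distribution stops being finite.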

Jane: So is there any hint of a time scale for this bifurcation point?

Vasileios: Unfortunately not. But the good news about bifurcation points is that at the moment at which they occur, all the components of a system are active and interconnected all at the same time. So small things can have a really big effect. This means that the actions we all take now – the networks we set up, the relationships we maintain – could tip the balance. As does the extent to which we stand in our humanity, as free individuals, because this keeps the system open. In this, I find some hope.
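The sensitivity described above can be sketched with a textbook toy model, the pitchfork normal form dx/dt = r·x − x³. This is an illustrative assumption rather than a model used in the interview, and `settle` is a hypothetical helper. Below the bifurcation (r < 0) every small disturbance dies away; past it (r > 0) the sign of an arbitrarily small initial nudge decides which of two outcomes the system settles into.

```python
def settle(r: float, x0: float, dt: float = 0.01, steps: int = 20000) -> float:
    """Integrate dx/dt = r*x - x**3 with forward Euler; return the final state."""
    x = x0
    for _ in range(steps):
        x += dt * (r * x - x ** 3)
    return x


nudge = 1e-6  # a tiny perturbation, positive or negative

# Before the bifurcation: the nudge is damped out and the system returns to 0.
before = settle(r=-1.0, x0=nudge)

# After the bifurcation: the same tiny nudge selects one of two distinct states.
after_up = settle(r=1.0, x0=+nudge)
after_down = settle(r=1.0, x0=-nudge)

print(f"r = -1: final state {before:.3e}")
print(f"r = +1: final state {after_up:+.3f} (positive nudge)")
print(f"r = +1: final state {after_down:+.3f} (negative nudge)")
```

With these parameters the two runs at r = 1 end near +1 and −1 respectively, although their starting points differ by only two millionths.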

Still from the 1999 film The Matrix. Morpheus says to Neo: “You take the blue pill, the story ends. You wake up in your bed and believe whatever you want to believe. You take the red pill, you stay in wonderland, and I show you how deep the rabbit hole goes”

Jane: So finally, coming back to our main subject, how do you see the science of complexity developing in the future?

Vasileios: It is very important to remember that complexity theory is not over yet; it is really just beginning. If science is going to be developed with the aim of understanding what goes on in the universe, then I feel that complexity will find a place as a separate, over-arching, inter-disciplinary science. But if science is just going to be the servant of technology, and not a separate way of understanding, then I am less hopeful. Complexity theory merits being set up as an independent form of investigation, with its own concepts, funding, etc. But if science is just dedicated to utilitarian ends, real scientific enquiry will be pushed to the periphery of our culture.

I think that what is really needed is to support ‘curiosity-driven research’ once more, generously and through many different channels, especially for complex systems. Then the rewards will be plentiful. All great innovations come out of the playful curiosity of the human mind and its need to understand. We have to kindle this light again in any way we can.

Image Sources

Banner picture: Dr Vasileios Basios at the Department of the Physics of Complex Systems in Brussels. Photograph by Jane Clark.

First insert picture: Lorenz attractor embodying “the butterfly effect”. Photo: https://imaginary.org/gallery/the-lorenz-attractor

Other Sources

STEPHEN HAWKING: “I think the next century will be the century of complexity”, in San Jose Mercury News, 23rd January 2000.

VASILEIOS BASIOS: “The Charm of Complexity Science”, lecture given at Imperial College, London in November 2014, organised by the Scientific and Medical Network. It can be viewed on https://www.youtube.com/watch?v=9qEEkXygztM

D. J. WATTS and S. H. STROGATZ: “The Collective Dynamics of Small-world Networks”, in Nature, Vol. 393, 1998, p. 440.

RICHARD C. STROHMAN: “The coming Kuhnian revolution in biology”, in Nature Biotechnology, Vol. 15, March 1997.

MAX PLANCK: “I regard consciousness as fundamental…”, in The Observer, 25th January 1931.

ILYA PRIGOGINE and ISABELLE STENGERS, Order Out of Chaos, Flamingo 1993 (reissue).

ILYA PRIGOGINE: “The Rediscovery of Time”, in Beshara Magazine, Issue 9, 1989.

Video: Belousov-Zhabotinsky Reaction. 3:36 minutes

Video: Flight of the Starlings. 2:00 minutes

READERS’ COMMENTS

14 Comments

Brilliant article bringing us up to date on this fascinating and essential science. Glad to know we are ‘open systems’. Thank you. A couple of points:

(a) Not sure that what you say about weather prediction is correct – I always thought it was 5 days not 5 years ahead!

(b) Great to have picture from the Matrix – and very interesting points made about love & knowledge in this context. I think the caption to the photo should be extended as not everybody will know the reference.

Richard: thank you very much for these kind words. Your query about weather predictions led me to listen to the original recording of the interview, and Vasileios does indeed refer to a 5 year period. I think he is referring to general trends rather than any sort of detailed prediction. This interests me enough to take it further so I shall ask Vasileios if he could say more about it.

thank you very much, very useful article.

Great interview.

excellent article a very broad vision of the science of complexity